The best gaming TV 2025: my top high-spec living room screens

My best TV picks for fantastic gaming visuals.

So, you're thinking of grabbing the best gaming TV out there. Great! But where should you start? Well, I've been using TVs to play games all my life (seriously, I started when I was four with a knock-off Atari plug-and-play console), so I can point you towards the exact display that will fit your specific needs.

Objectively, there is no single best gaming TV out there, but the one I'd say is the most impressive right now overall is the LG OLED G4. It's a super vibrant OLED display with super speedy 144Hz capabilities and is superbly bright, not to mention it benefits from the company's premium MLA+ (Micro Lens Array) tech. However, I could be swayed to point more of you towards the newer LG OLED G5 once it drops a little in price since it ramps things up to 165Hz and uses a new "four stack" Tandem RGB panel for better brightness and colors.

But hey, I know most of you aren't looking to spend thousands on the best gaming TV. So, rather than just declaring one winner and calling it a day, I've tested a whole host of displays at different price points that will be fantastic for consoles and kicking back to watch movies. Depending on your budget, you'll be able to upgrade to a newer mini LED or OLED panel with dazzling capabilities, but good old LED is also still around if you want to keep things super cheap.

The Quick list

A premium OLED powerhouse that pairs perfectly with consoles and PC.

More affordable than the OLED G3, but still packs a punch.

Read more below

For under $500, this Hisense panel is exceptional value for money.

Read more below

Also one of the top OLED TVs out there, this is an example of Samsung's best.

Read more below

One of the best Sony gaming TVs, this mini LED panel offers surprising contrast that's almost on par with OLED.

Read more below

Samsung's QLED panel caters to gamers with great features, striking fidelity, and heroic HDR.

Read more below

A glimpse into the future, this 8K Samsung set has more to offer than resolution.

Read more below

Phil has been testing the best gaming TVs for years, offering up advice on models worth buying specifically for gaming both in reviews and in a physical store. They also have a love for screens beyond the latest OLED and mini LED panels, as they have a collection of televisions that ranges from old CRTs for retro gaming to excellent smaller panels that offer up plenty of ports.

April, 2025 - Ahead of more gaming TV reviews in 2025, I've checked over my current picks to ensure each model is still the right fit for its category and available at retailers. I've also expanded the "how to choose" section with up-to-date information and added some context surrounding OLED screen burn and new 165Hz refresh rate models.

The best gaming TV

Specifications

Reasons to buy

Reasons to avoid

There's a new gaming TV champion in town, and the LG OLED G4 builds upon everything that made last year's OLED G3 model so great. It's admittedly one of the priciest panels around, but investing in this monstrous display will grant you faster visuals, deliciously deep blacks, and incredible HDR results across the board.

✅ You want the best: The 2024 G4 is one of the best TVs you can buy right now, and it'll offer up more features and better performance than most other screens.

✅ You've got a new gen console: This flagship goes above and beyond with a 144Hz refresh rate, so even future consoles will benefit from its speed.

✅ You prefer bigger screens: With sizes spanning all the way up to 83 inches, you'll be able to easily create a cinema experience at home.

❌ You'd prefer to spend less: There are far cheaper TVs out there that are still worth a look, even if they can't quite reach the glory of the G4.

❌ You need a stand: The larger G4 models don't actually come with a stand, so wall mounting is essential.

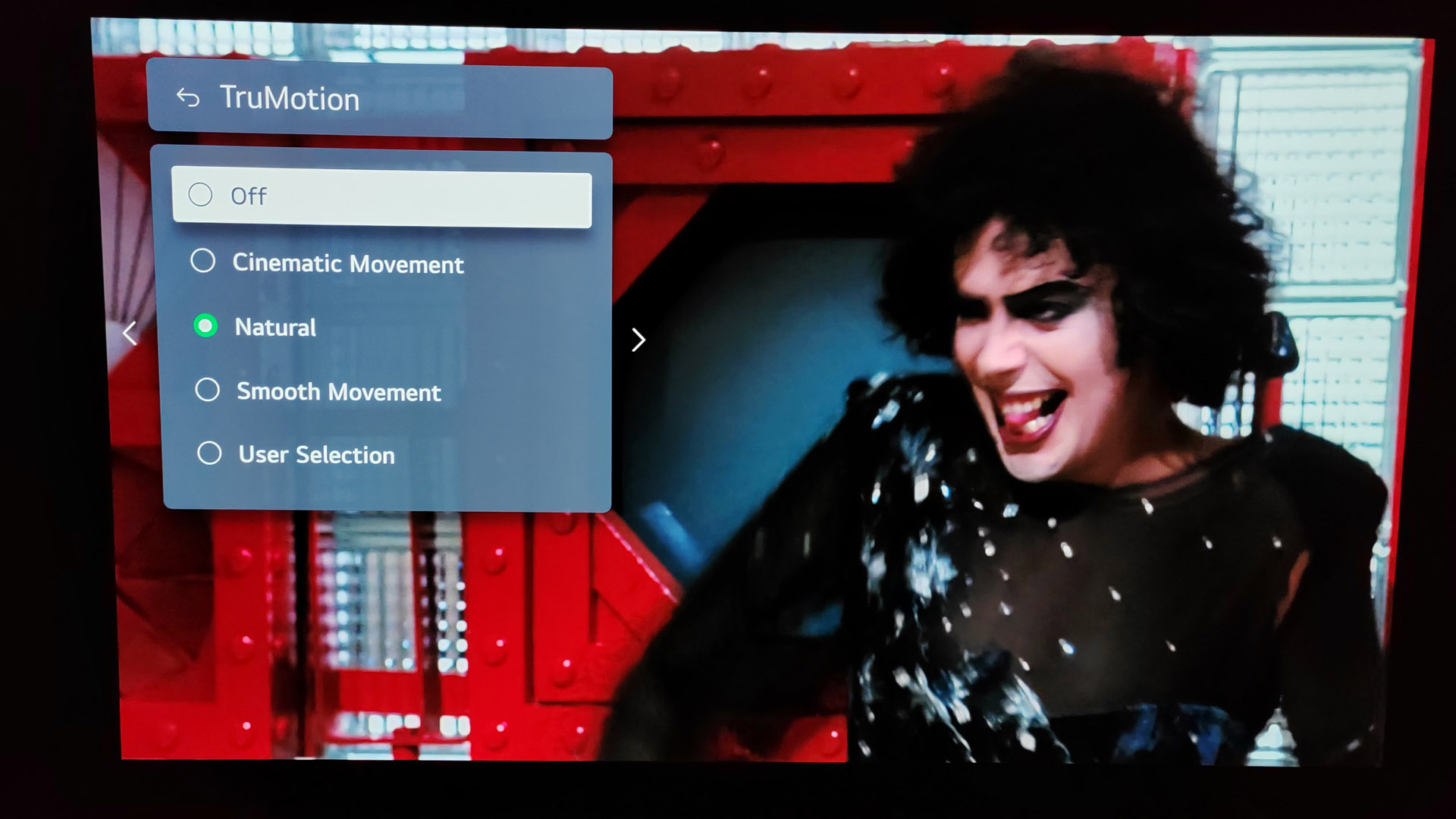

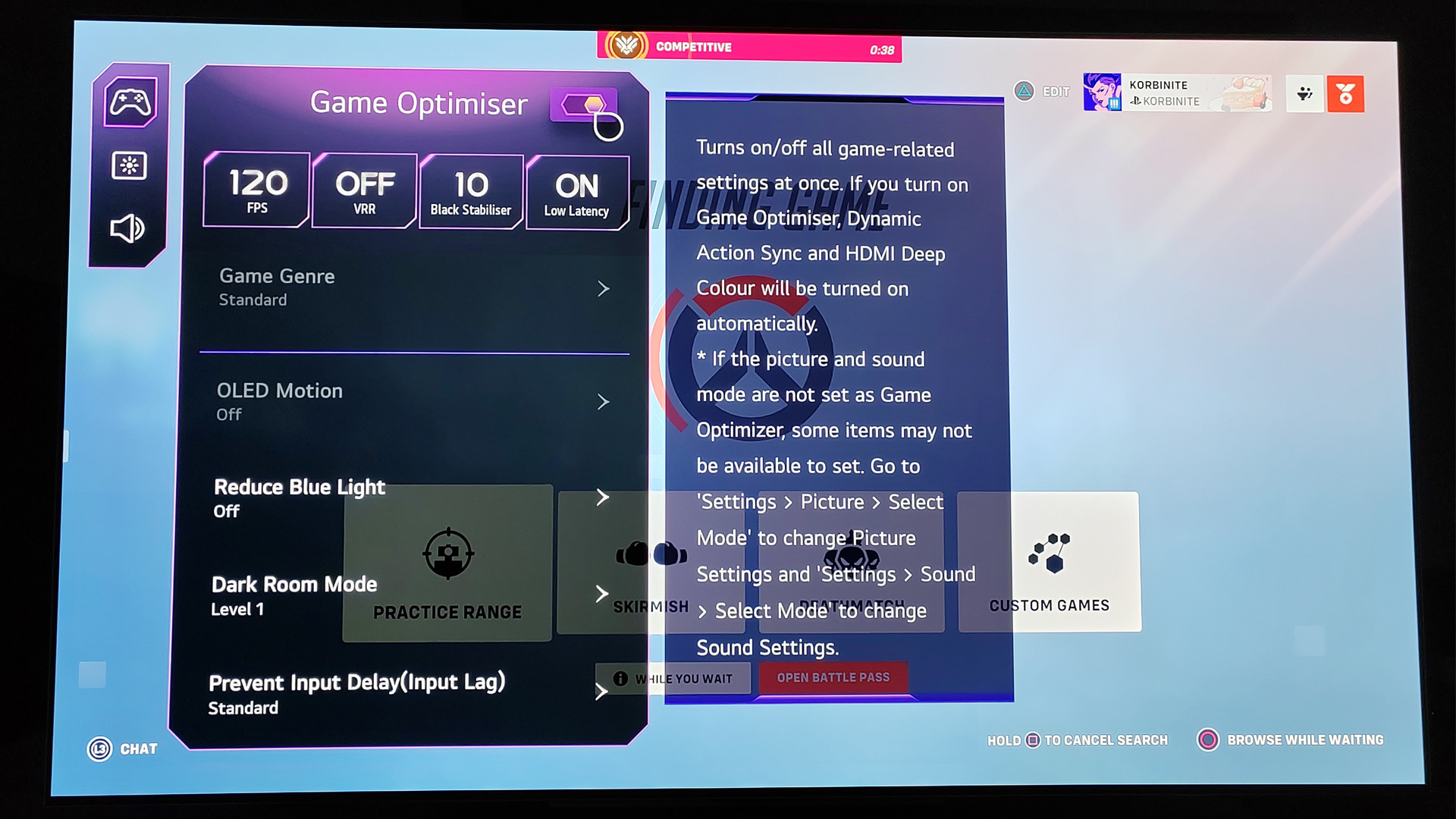

Features: The OLED G4 is undeniably feature heavy, boasting a 144Hz panel laced with HDR10 and Dolby Vision as well as handy PC features like Nvidia G-Sync and AMD FreeSync. Those latter perks make even more sense now that LG's flagship TV can put some UHD gaming monitors to shame, not to mention you'll still have all the usual smart options to hand via the Magic Remote. There's even a gaming hub that'll condense all the options you'll need, like VRR and latency settings, into one convenient quick access widget, something that makes it deserving of gaming TV status.

Design: Not much has changed in terms of design compared to the G3, with sizes still spanning up to a whopping 83 inches. However, LG arguably nailed its approach the first time with a sleek aesthetic that incorporates cable management into its ultra thin chassis. Just keep in mind that models above 65 inches won't come with a stand, meaning you'll either have to wall mount or find a suitable third-party stand. That's a bit of a pity, as the rectangular pedestal included with the 'smaller' models is pretty attractive compared to previous iterations.

Performance: Again, the OLED G4 takes what the G3 model introduced last year, namely Micro Lens Array (MLA) tech, and provides an incredible visual experience in both gaming and films. In the background, you've got a new Alpha 11 AI processor ensuring you get the absolute best results when viewing HDR content or boosting fps using a PS5 or PC, and the effort really pays off.

In testing, PlayStation favorites like Spyro Reignited Trilogy and new PC games like Still Wakes the Deep benefited from elevated contrast and colors, while 4K Blu-rays like Prey put both streaming services and even cinema screens to shame thanks to this display. It's pretty hard to believe that TVs in 2024 can pull off blisteringly fast speeds and high fidelity visuals, but the G4 certainly manages it.

Verdict: The new LG OLED G4 isn't an approachable TV in terms of price, but the visual experience it provides does live up to its premium tag. It's important to have high-spec screens to establish a bar for others to reach, and this flagship sets a new living room standard that will benefit both PC and console players tenfold.

Read more: LG OLED G4 review

Value | ★★★★☆ |

Screen quality | ★★★★★ |

Speed | ★★★★★ |

Software | ★★★★☆ |

Connectivity | ★★★★★ |

The best gaming TV for most people

Specifications

Reasons to buy

Reasons to avoid

The LG OLED C3 and its brand new C4 successor are pretty similar, so we think last year's version is ultimately the model worth buying. Don't get us wrong, it's still pretty expensive, but there's a massive price gap between it and both the 2024 screen and G-series panels like the G3 and G4.

✅ You want to spend a bit less: It's not a budget option by any means, but the C3 costs substantially less than its beefier OLED G3 sibling.

✅ You want 4K 120Hz: Just like the G3, the OLED C3 is a 4K 120Hz display, so your PS5, Xbox, and gaming PC will benefit from its higher refresh rate.

✅ You're looking for OLED: If you're hellbent on going for OLED over other panel types, the C3 isn't going to disappoint.

❌ You're on a tight budget: The C3 might be cheaper, but it still comes with a premium price tag that's a chunk above non-OLED rivals.

❌ You want superior HDR: The C3 isn't an HDR heavy hitter, and while results will vary based on your chosen content type and device, you'll want to opt for the G3 if you're seeking perfection.

Features: The 42-inch LG OLED C3 we tested is the smallest of the bunch, and is aimed at movie fans looking for a bijou telly box and gaming enthusiasts looking for a large desktop TV display. It offers various picture pre-sets, with Cinema and Cinema Home modes delivering a more theatrical performance and enhanced colour saturation. Motion handling is excellent, with effective options to reduce judder without sacrificing image quality.

Powered by the latest Alpha 9 Gen 6 processor, this screen maintains a high level of AI sound and vision management, but it lacks the brightness boosting algorithms found in larger models. Still, it delivers impressive picture quality with superb fine detail, punchy contrast built from inky blacks, and vibrant colours.

Design: The C3 is without a doubt one of the best looking gaming TVs around. Its minimal border and dark metal finish provide a slick aesthetic that feels genuinely premium, and the G3's lower spec sibling even comes with a stand. The 42-inch model trades out a pedestal for two widely spaced feet, which may or may not appeal to you depending on the size of your TV bench or desk.

Performance: The set’s HDR performance is solid, although it lacks support for HDR10+, the preferred HDR standard of Prime Video. On the sound front, the TV's downward firing speakers are functional but may benefit from a soundbar upgrade.

We think the TV excels as both an everyday screen and a near-field gaming display, thanks to universal 4K 120Hz HDMI support, VRR and ALLM coverage, and a dedicated game interface. Support for FreeSync Premium and Nvidia G-Sync VRR makes it suitable for high frame rate gaming. Latency is good too: we measured input lag at 13.1ms (1080/60).

Verdict: While not as bright as the larger (55-inch upwards) C3 models, the LG OLED42C3 TV still provides top-notch picture quality, extensive gaming features, and a sleek design. Unfortunately, the high price tag might limit its overall appeal, but it's still one of the best 120Hz 4K TV options out there that costs less than the mighty G3.

Read more: LG OLED C3 review

Value | ★★★★★ |

Screen quality | ★★★★☆ |

Speed | ★★★★☆ |

Software | ★★★★☆ |

Connectivity | ★★★★☆ |

The best gaming TV under $500

Specifications

Reasons to buy

Reasons to avoid

The Hisense A6G is an impressive gaming TV with a fabulous price tag, and for under $500, you'll get an exceptional screen that excels at the basics. Sure, it's not going to wow you with its 60Hz refresh rate, nor does it stand a chance against OLED and QLED titans dominating the scene right now. However, if you merely want a functional TV that both looks the part and comes in big sizes, this is it.

✅ You're on a strict budget: The A6G balances specs and price perfectly, but still offers features that'll benefit PS5 and Xbox players alike.

✅ You can live without 120Hz: Not everyone is going to make full use of a faster refresh rate, and 60Hz is still completely fine in most casual console scenarios.

✅ You want bigger for less: The 65-inch model specifically costs under $500, and that's a lot of TV for the money.

❌ You play FPS games: Playing shooters like Overwatch 2 at 120Hz makes a difference, and 60Hz may feel sluggish by comparison.

❌ You need brighter HDR: We weren't expecting the world from the A6G's HDR abilities, but it was a little dim during testing.

Features: Again, we're paddling in budget waters here, so it's only natural that the Hisense A6G opts for 4K 60Hz over a faster 120Hz refresh rate. Yet, this UHD screen isn't completely devoid of nice to have extras, as it supports both ALLM (Auto Low Latency Mode) and VRR (Variable Refresh Rate), which are handy if you've got a PS5 or Xbox Series X.

All the usual port suspects are located at the side, including three HDMI, Ethernet, optical out, and USB 3.0. Of course, it's also got Wi-Fi connectivity if you fancy using this smart TV as, well, a smart TV, and Hisense's Vidaa platform will provide you with access to whatever streaming service you're paying for at the moment. The US iteration has Android TV with Chromecast built-in, but since we're in the UK, we can't comment on how it compares to the company's in-house solution.

Design: Hisense's value TV uses a combination of slim bezel and spaced-out feet to provide a modern look that doesn't scream under $500. In terms of connections, you'll find three HDMIs on the rear, but the ports won't enable your console to hit 4K 120Hz. That 60Hz ceiling is to be expected at this price, but ALLM (Auto Low Latency Mode) and VRR (Variable Refresh Rate) support is present.

Performance: Considering we're talking about a TV that's under $500, the A6G produces excellent fine detail and reasonable dynamics. Dolby Vision support helps a lot, effortlessly making the set shine with compatible shows.

We found the motion handling is accomplished too: 60Hz MEMC (Motion Estimation Motion Compensation) interpolation, presented in a variety of strengths, works well for general TV and sport. Hisense claims an input lag of better than 20ms, and while we achieved a slower 48.2ms (1080/60) result with Game mode selected during our testing, it's still pretty snappy.

Verdict: Ultimately, the Hisense A6G is the best cheap gaming TV we've used, and it's the first screen that pops to mind when recommending budget options. The lack of 120Hz is a bummer, and perhaps one day it'll become a standard refresh rate across the board, but it's an easy omission to ignore when you're getting 65 inches for under $500.

Read more: Hisense A6G review

Value | ★★★★☆ |

Screen quality | ★★★★☆ |

Speed | ★★★☆☆ |

Software | ★★★★☆ |

Connectivity | ★★★☆☆ |

The best Samsung gaming TV

Specifications

Reasons to buy

Reasons to avoid

The Samsung S95B is a gaming TV that packs a QD-OLED punch, but it's also one of the most eye catching screens you can buy. The display is pretty much a testament to the tech giant's abilities as a TV manufacturer, and there's not much else you could ask for in a premium panel.

✅ You need a super bright OLED TV: Boasting consistent brightness and exceptional blacks, this Samsung QD-OLED TV is a game changer.

✅ You appreciate smart features: Samsung's Tizen smart platform is pretty comprehensive and feeds into the brand's SmartThings ecosystem.

✅ You're looking for low latency: This 4K 120Hz screen provides exceptional low latency visuals, making it a nice fit for shooters and fast paced fighting games.

❌ You need something bigger: The biggest model you can grab is 65-inch, which may deter players looking for monstrous screen sizes that'll fill a wall.

❌ You'd rather not risk burn in: This applies to all OLED models out there, but it's worth keeping in mind if you prioritise longevity over screen quality.

Features: The Samsung S95B's QD-OLED panel combines characteristic OLED black levels with the high peak brightness and the expanded colour volume of Quantum Dot technology, making it a brilliant choice if you prefer to use your TV in a room with high levels of ambient light.

Just like other premium displays, the S95B supports 4K 120Hz, and it's included across all four of its HDMI inputs alongside VRR (Variable Refresh Rate). PC gamers will also be able to take advantage of Nvidia G-Sync and AMD FreeSync, with ALLM (Auto Low Latency Mode) helping to keep everything snappy regardless of your platform.

Design: Samsung refers to the S95B as 'laser slim', and that doesn't really feel like an exaggeration. In fact, we were a little nervous handling it while testing, as it felt a bit fragile due to its wafer thin body. Thankfully, its metal back provides rigidity without adding bulk, which preserves the whole minimalist aesthetic the S95B is going for.

Performance: Simply put, the Samsung S95B's image quality is spectacular. The level of detail is excellent, and its HDR performance is remarkable. We're talking HDR brightness over 1400 nits, which isn't going to happen with a standard OLED setup. Sadly, there’s no Dolby Vision support present, but you do get HLG, HDR10, and HDR10+ compatibility. It’s not just peak HDR brightness which glows, as the set’s average picture level is high and this makes it easy to view in bright rooms. This can cause fatigue, especially if you're not used to this sort of screen, and even the Game mode looks overwrought. On the plus side, input lag is low in Game mode (we measured it at 9.6ms), not to mention its 4K 120fps abilities are buttery smooth.

Verdict: All things considered, the Samsung S95B is a highly impressive QD-OLED debut. Its peak brightness is phenomenal, and colour depth is high. It never looks particularly cinematic though, and even in Game mode, pictures can seem over-saturated. Some will love the presentation, however, and it's a great gaming TV that could set the scene for a new section of the best gaming TV market.

Read more: Samsung S95B review

Value | ★★★★☆ |

Screen quality | ★★★★★ |

Speed | ★★★★☆ |

Software | ★★★★☆ |

Connectivity | ★★★★☆ |

The best mini LED gaming TV

Specifications

Reasons to buy

Reasons to avoid

The Sony XR-75X95K is a fantastic example of a mini LED TV that trades blows with even the best panel types around. Superb brightness and excellent detail help earn this high spec screen a place on this list, and it's an excellent addition to the TV maker's 2023 portfolio.

✅ You've got a PS5: The XR-75X95K is included in Sony's 'Perfect for PlayStation 5' ecosystem, granting it access to features like auto HDR tone mapping and auto genre modes.

✅ You're looking for great contrast: Sony's mini LED tech holds up against its OLED competition, and its brightness helps HDR hit harder.

✅ You're after great audio: If you're looking for speakers with a bit of oomph, you'll be pleased with the XR-75X95K's Dolby Atmos capabilities.

❌ You need a slimmer TV: Despite using mini LED tech, the XR-75X95K is pretty chonky, and it weighs more than you'd expect too.

❌ You require super sharp motion: Motion occasionally looks soft during fast paced scenes, and it might spoil your experience if you tend to notice subtle differences.

Features: The XR-75X95K is Sony’s first ever TV to deploy Mini LED technology - a system where using much smaller LED backlights allows far more of them to be squeezed into the TV’s 75-inch screen, delivering potentially more brightness and, even more importantly, finer light controls.

Controls are backed up by an impressive 600 separately controllable dimming zones. The cutting-edge screen technology is backed up by support for 4K/120Hz gaming, VRR and Dolby Vision HDR - though the two gaming-specific features here only work across two HDMIs, not all four.

Design: The XR-75X95K is a bit beastly, as its sides and back are chonkier than you'd think given it's a mini LED set. It's notably heavier to lift than modern OLED alternatives too, but that's not necessarily a bad thing given that it helps it feel sturdier build quality wise. Just don't try to set it up on your own, as its weight will catch you off guard.

Round the back, the TV features a pleasing grid pattern that's reminiscent of old Sega Mega Drive (Genesis) artwork. This is perhaps unnecessary given you won't be looking round there that often, but it still adds to the experience. We're also grateful for the screen's narrow chrome feet, as they provide sturdiness while enabling the display to fit on most benches and desks.

Performance: You’d never guess this was Sony’s first Mini LED rodeo from its picture quality. Immediately we were struck by how bright and colourful its images looked with both gaming and video sources, with its brightness, in particular, pushing comfortably beyond anything OLED screens can currently achieve. This ensures HDR pictures in particular enjoy spectacular lifelike intensity and richness without compromising subtle details.

Black levels and backlight controls are mostly excellent by LCD TV standards too (if you can avoid watching from a wide angle, anyway), while the potent visuals are joined by a powerful, detailed and dynamic audio performance that rounds out the TV’s cinematic credentials perfectly. Occasional softness when showing motion and minor ‘flatness’ with mid-dark imagery don’t even come close to stopping the 75X95K from being overall an outstanding TV for its money.

Verdict: We reckon the Sony XR-75X95K exemplifies the company's TV expertise, and it's a great example of what mini LED can achieve. It's going to facilitate excellent fidelity and brightness when watching movies and playing PS5, and the latter also benefits from auto HDR tone mapping and auto genre picture mode thanks to the company's 'Perfect for PS5' initiative.

Read more: Sony XR-75X95K review

Value | ★★★★☆ |

Screen quality | ★★★★☆ |

Speed | ★★★★☆ |

Software | ★★★★★ |

Connectivity | ★★★☆☆ |

The best QLED gaming TV

Specifications

Reasons to buy

Reasons to avoid

If it's the best QLED TV you're after, the Samsung QN95A is an excellent option. Building on Samsung's already-brilliant QLED panel tech, this Mini-LED-powered 4K flagship has deep blacks, terrific picture quality, vibrant colours and contrast, and exquisite HDR management.

✅ You're looking for the best QLED: Samsung's blend of QLED and mini LED tech is sublime, providing colors, contrast, and HDR that rivals OLED.

✅ You want a wall mounted TV: Thanks to its One Connect box housing bulky IO, the QN95A won't stick out much when wall mounted.

✅ You want 4K 120Hz: Samsung's thin panel has a refresh rate that'll match your new gen console's max abilities, all while providing crisp 4K visuals.

❌ You don't have a lot of space: The QN95A itself is thin, but you'll have to make room for its additional One Connect box.

❌ You want Dolby Vision: This pricey screen lacks Dolby Vision support, so if you're a fan of the tech, you may have to shop around for an alternative.

Features: The Samsung QN95A comes with one of the company's One Connect Boxes, which connects to the set via a fibre optic cable. The unit includes four HDMI 2.1 connections, meaning you'll be able to hook up a PS5, Xbox Series X, gaming PC, and something like a Steam Deck dock without sacrificing output specs.

Smart connectivity is provided by Tizen, Samsung’s smart TV platform and there’s a wide range of apps available, including Netflix, Prime Video, Apple TV+, Disney+, and Now, plus all the usual catch-up TV services. We are really excited about the new Game Bar feature, too. This is a dedicated interface for tweaks and adjustments that makes for excellent customisation and tinkering.

Design: Thanks to the aforementioned One Connect Box, the Samsung QN95A is only 25.9mm thick. That's a huge boon for anyone looking for a flush wall mounted TV, as you won't need to disguise girth using a recess. It's also got virtually no frame, resulting in something that looks like a bezel-less portal to another realm. You won't even have to wall mount it to gain this effect, as its pedestal stand is so subtle that it's virtually unnoticeable.

Performance: Simply put, we found the image quality is superb, thanks to an advanced AI-powered Neo Quantum 4K processor, while an Intelligent Mode optimises all sources, making it an easy screen to live with, whatever you watch, and whatever you prefer. Even its integrated sound system has improved, thanks to Samsung’s OTS+ tech being included.

Verdict: Overall, the QN95A is one of the best QLED models Samsung has created, and it proves the worth of mini LED tech too. The One Connect box setup won't be for everyone, and it's missing some nice to have features like Dolby Vision. Nevertheless, we're huge fans of this killer 4K screen, external boxes and all.

Read more: Samsung QN95A review

Value | ★★★★☆ |

Screen quality | ★★★★★ |

Speed | ★★★★☆ |

Software | ★★★★☆ |

Connectivity | ★★★☆☆ |

The best 8K gaming TV

7. Samsung QN900A

Specifications

Reasons to buy

Reasons to avoid

Not everyone is going to appreciate the Samsung QN900A and its 8K abilities. However, if you're eager to try out the future standard, or have a graphics card that can actually output higher than 4K, this screen will happily furnish your eyes with a ridiculously high resolution.

✅ You want to experience 8K: Samsung's UHD screen can pump out four times as many pixels as a 4K panel, which will appeal to any of you looking for a glimpse into the future.

✅ You've got a high end PC: It's perhaps not practical performance wise, but if you've got the GPU, you'll be able to try out games in 8K.

✅ You want a thin TV: Just like Samsung's QLED 4K model, the QN900A is incredibly thin thanks to its external One Connect box IO.

❌ You've got no access to 8K content: While the QN900A will upscale to 8K, reaping its true benefits requires the right hardware.

❌ You're not made of money: As you'd perhaps expect, this 8K TV costs a pretty penny, and similar 4K models are available for a chunk less.

Features: The Samsung QN900A earns its place as the best 8K gaming TV thanks to its advanced Mini LED backlight, capable of greater precision than a conventional full-array backlight. HDR support covers regular HDR10 and HLG, along with HDR10+. However, there’s no room for Dolby Vision, which will disappoint both film fans (it’s the standard HDR offering on Netflix and Disney+) and Xbox owners. The TV's audio is above average, courtesy of Object Tracking Sound Pro. The QN900A actually has ten speakers built into its slim frame. There’s no OTS support for Dolby Atmos, though.

Design: The set looks the part thanks to its ultra-slim Infinity Design. With an ‘invisible’ bezel, the panel is all picture. Just like the Samsung QN95A, this 8K screen uses a One Connect box, meaning you'll connect all your consoles and other devices to an external unit, which then uses a single cable to feed the TV.

Performance: The QLED panel within the Samsung QN900A performs pretty similarly to the QN95A, producing exceptional brightness, contrast, and black levels. While it is a true 8K TV, you'll have to stick with 4K to actually reap the benefits of its 120Hz refresh rate, as its HDMI 2.1 capabilities are capped to 60Hz when catering to native resolution. You'll also need to pick up one of the best HDMI cables to ensure you can actually reach those lofty 7680 × 4320 heights, so keep that in mind if you don't have one to hand.

Of course, if you're rocking a graphics card like the Nvidia GeForce RTX 4090 or AMD Radeon RX 7900 XTX, playing games at 8K is actually an option. Whether you'll want to dial back settings to low to maintain a decent frame rate is another matter entirely, but you can still brag to your friends that you can run your Steam library at double the resolution they can.

Verdict: The world at large might not be ready for 8K, but if you want to get ahead of the curve, the Samsung QN900A will help you achieve beyond 4K. It boasts all the same qualities as similar 4K models too, so you won't have to trade away vital premium features for the sake of resolution. Just keep in mind that using 8K in 2024 still comes at a cost, and this TV costs way more than its 4K equivalent.

Value | ★★★☆☆ |

Screen quality | ★★★★☆ |

Speed | ★★★★☆ |

Software | ★★★★☆ |

Connectivity | ★★★☆☆ |

Also tested

Not every display can occupy a best gaming TV top spot, but there are still plenty of models that are worth considering. Below, you'll find a bunch of tested panels and their respective reviews, as well as a quick verdict based on whether I think they're worthwhile.

Hisense U7K | Check Amazon

Armed with a 144Hz mini LED panel, the U7K still packs a punch for living room PC gaming and offers up tremendous speed for PS5 and Xbox Series X.

Read more: Hisense U7K review

Sony A90K | Check Amazon

A fantastic smaller OLED screen that pairs brilliantly with PlayStation 5 thanks to its 120Hz abilities. You're only getting two HDMI 2.1 ports with this display, and its speakers aren't the best, but it's still worth considering at a discount.

Read more: Sony A90K review

LG OLED G3 | Check Amazon

Once crowned our overall favorite, last year's front-runner still packs a 4K 120Hz punch and excellent OLED visuals. One to keep an eye on during the sales while stock is still around.

Read more: LG OLED G3 review

Sony X90J | Check Amazon

It's getting on a bit in terms of age, but the X90J is still a great PS5 screen with 4K 120Hz abilities, especially if you can find it for the right price in 2024.

Read more: Sony X90J review

Samsung Q70T | Check Amazon

A good QLED package, but only if you can get it for far less than newer rival screens. A prime candidate for price cuts if you're looking for 4K 120Hz.

Read more: Samsung Q70T review

LG QNED91 | Check Amazon

LG's take on mini LED tech is worth considering if you're not into OLED screens, but availability and pricing could be an issue in 2024. If it's available and cheap, take full advantage.

Read more: LG QNED91 review

Jargon buster - tricky TV tech terms explained

Why you can trust GamesRadar+

4K

This is the resolution of the image that can be displayed by your TV. 4K refers to the resolution 3840x2160 pixels. It's also referred to as UHD or Ultra HD by some broadcasters or manufacturers. Basically, if a TV can display pictures in 3840x2160 it can be called a 4K TV or 4K ready TV.
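If you want to see how those numbers stack up, here's a quick sketch of the pixel arithmetic in Python. The 4K figure comes from the definition above and the 8K figure (7680 × 4320) is the one quoted in the QN900A section. The dictionary and function names are my own, purely for illustration.

```python
# Pixel-count arithmetic for common TV resolutions.
# RESOLUTIONS and pixel_count are illustrative names, not part of any standard.
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "4K": (3840, 2160),
    "8K": (7680, 4320),
}


def pixel_count(name: str) -> int:
    """Return the total number of pixels for a named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height


for name in RESOLUTIONS:
    print(f"{name}: {pixel_count(name):,} pixels")

# How many times more pixels an 8K panel has than a 4K one
print("8K vs 4K:", pixel_count("8K") / pixel_count("4K"))
```

Doubling both the width and the height is what makes each step up so demanding, since the pixel count goes up by a factor of four each time.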

HDR

HDR means High Dynamic Range. The majority of 4K TVs come with HDR as standard, and it's a technology used to process colors within games, movies, and TV shows. HDR isn't strictly about contrast - it's a way of making the difference more noticeable between colors (and blacks), and HDR can actually be used by game makers and developers to pick out more details in their creations. Primarily, HDR is used to boost the color of a picture by making colors more vivid, thereby contrasting them further. If you can separate very similar shades of color, then you can create clearer images. The minimum standard for HDR is a brightness of 400 nits (the measure of brightness on a TV), although some TVs manage 2000 nits in 2019.

OLED

This stands for Organic Light Emitting Diode, and it's a type of TV panel. Basically, while LCD panels require something called back-lighting or edge-lighting to create pictures on screen, OLED panels don't need it. With back-lit or edge-lit TVs, the LEDs in the panel are illuminated in groups or lines to create a picture. With OLED TVs, each LED on screen can be individually lit - switched on or off to create a picture. This is what allows for truer blacks in OLED sets. With the ability to completely switch off each individual LED, you get sharp edges on images and deep blacks because there is no backlight showing through at all.

QLED

This is Samsung's own technology, and it stands for Quantum Dot Light Emitting Diode. Quantum Dots are particles, which are lit to create a picture on the screen, and they can get much brighter than LEDs or OLEDs. This means QLED sets offer brighter colors and better contrasts than any other panel type. The panel is still either back-lit or edge-lit like traditional 4K TVs, and this can make a huge difference when it comes to black levels. Back-lit QLEDs can not only deliver vivid colors, but they can also produce sharp images and blacks that rival premium OLEDs. This makes them perfect for gaming.

Response time

You'll hear a lot about the response time of a panel, especially when discussing gaming TVs. This is basically the speed at which a color can change on your TV (eg. from black to white to black again). Most 4K TVs have response times quicker than we can perceive them, so it makes no real difference to gameplay outside the twitchiest of shooters. However, purists will want a TV with the quickest response time possible.

Refresh rate

This is the speed at which an image can be refreshed on your TV (and shouldn't be confused with response time). Basically, most TVs offer 60Hz-120Hz, although premium 4K models like the LG OLED G4 now reach 144Hz and the newer G5 hits 165Hz. If you want 240Hz or higher, you need one of the best gaming monitors. A 60Hz 4K TV, for example, refreshes the image on screen 60 times per second, which allows a certain level of smoothness to the image. If the TV refreshes at 120Hz, the image updates twice as often, and you notice that in how slick the motion appears on screen. Many TVs' 'game modes' will boost refresh rate artificially, usually by downgrading other display features (eg. reducing the brightness of your picture).
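To put refresh rates into perspective, here's a rough Python sketch that converts hertz into the time between refreshes, then expresses the input lag figures measured in this guide as a number of 60Hz refreshes. The function names are my own, and treating every lag measurement as a 60Hz result is an assumption on my part (only the C3 and A6G figures are explicitly listed as 1080/60).

```python
def frame_time_ms(refresh_hz: float) -> float:
    """Time between screen refreshes, in milliseconds."""
    return 1000.0 / refresh_hz


def lag_in_frames(input_lag_ms: float, refresh_hz: float) -> float:
    """Express an input lag measurement as a number of refresh intervals."""
    return input_lag_ms / frame_time_ms(refresh_hz)


# Refresh rates mentioned across this guide
for hz in (60, 120, 144, 165):
    print(f"{hz}Hz -> {frame_time_ms(hz):.2f} ms per refresh")

# Input lag figures quoted in the reviews above (assumed to be 60Hz results)
measured_lag_ms = {
    "LG OLED C3": 13.1,
    "Hisense A6G": 48.2,
    "Samsung S95B": 9.6,
}
for tv, lag in measured_lag_ms.items():
    print(f"{tv}: {lag_in_frames(lag, 60):.2f} refreshes of lag at 60Hz")
```

Anything under one refresh of lag is unlikely to be something you'll feel, whereas a figure closer to three refreshes is where twitchy shooters may start to feel a touch behind.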

HDMI

This stands for High Definition Multimedia Interface, and it's the standard connection between your 4K TV and most devices. You need at least HDMI 1.4 to carry a 4K signal (at up to 30 frames per second), while HDMI 2.0 is capable of carrying 4K signals at 60 frames per second. Plenty of console games still target 4K at 60fps or lower, so a 2.0 cable and 2.0 port on your TV will cover the basics. If you want 4K at 120Hz from a PS5, Xbox Series X, or gaming PC, though, you'll need an HDMI 2.1 port and an Ultra High Speed cable. And no, you don't need to buy expensive gold-plated HDMI cables to get a better picture - Amazon Basics will do just fine.
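
If you're wondering why the HDMI version matters so much, the rough Python sketch below compares the raw data rate of a video signal against the headline bandwidth of each HDMI version (10.2Gbps for 1.4, 18Gbps for 2.0, and 48Gbps for 2.1). It's a simplification: the helper names are my own, and it ignores blanking intervals, encoding overhead, chroma subsampling, and compression, all of which shift the real-world figures.

```python
# Headline maximum link rates in gigabits per second for each HDMI version.
HDMI_MAX_GBPS = {"1.4": 10.2, "2.0": 18.0, "2.1": 48.0}


def raw_video_gbps(width: int, height: int, fps: int, bits_per_channel: int = 8) -> float:
    """Uncompressed RGB data rate in Gbps (assumption: no blanking or encoding overhead)."""
    bits_per_pixel = bits_per_channel * 3  # red, green, and blue channels
    return width * height * fps * bits_per_pixel / 1e9


def fits(version: str, width: int, height: int, fps: int, bits_per_channel: int = 8) -> bool:
    """Simplified check: does the raw signal fit under the version's headline rate?"""
    return raw_video_gbps(width, height, fps, bits_per_channel) <= HDMI_MAX_GBPS[version]


if __name__ == "__main__":
    for fps in (30, 60, 120):
        rate = raw_video_gbps(3840, 2160, fps)
        ok = [v for v in HDMI_MAX_GBPS if fits(v, 3840, 2160, fps)]
        print(f"4K at {fps}fps needs roughly {rate:.1f}Gbps -> fits HDMI {', '.join(ok)}")
```

Even with the overheads left out, 4K at 120fps lands well beyond what HDMI 2.0 can carry, which is why HDMI 2.1 ports matter for high refresh rate gaming.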

FAQ

What size TV is best for gaming?

The right size of TV fully depends on your specific setup. For instance, if you're planning on kicking back around 3 meters away on the couch, you'll benefit from a larger screen. If you're looking for something for the bedroom or to sit on a desk as a monitor alternative, you'll be able to shrink things down and sit a bit closer. Just keep in mind that the closer you sit to a screen, the more likely you are to notice softness with lower resolutions, especially if you're using a 1080p panel.
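
For a rough feel of how distance and resolution interact, the Python sketch below estimates how many pixels fall into each degree of your vision for a given screen size and seating position. It assumes a flat 16:9 panel viewed head on, and the function names are hypothetical ones I've picked for illustration. A commonly quoted rule of thumb is that around 60 pixels per degree is where individual pixels become hard to pick out, but treat that as a loose guide rather than a hard limit.

```python
import math


def screen_width_inches(diagonal_inches: float, aspect=(16, 9)) -> float:
    """Width of a screen from its diagonal, assuming the given aspect ratio."""
    w, h = aspect
    return diagonal_inches * w / math.hypot(w, h)


def pixels_per_degree(diagonal_inches: float, distance_m: float, horizontal_pixels: int) -> float:
    """Average horizontal pixels per degree of viewing angle at a given distance."""
    width_m = screen_width_inches(diagonal_inches) * 0.0254  # inches to meters
    # Angle the screen spans across your field of view, in degrees.
    angle_deg = math.degrees(2 * math.atan(width_m / (2 * distance_m)))
    return horizontal_pixels / angle_deg


if __name__ == "__main__":
    for distance in (1.0, 2.0, 3.0):
        fhd = pixels_per_degree(55, distance, 1920)
        uhd = pixels_per_degree(55, distance, 3840)
        print(f"55-inch at {distance}m: 1080p ~{fhd:.0f} ppd, 4K ~{uhd:.0f} ppd")
```

The closer you sit, the fewer pixels cover each degree of your view, so a 1080p panel starts to look soft much sooner than a 4K one.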

What TV screen type is best for gaming?

Generally speaking, investing in different screen types like OLED or mini LED will give your gaming visuals a glow up. However, it's important not to forget about fidelity and speed. While these panels will offer up better contrast and colors compared to traditional LED screens, a slower refresh rate or response time could affect how your favorite games feel to control. Striking a balance is key, and taking into account your favorite genres is a must.

Is QLED or OLED TV better for gaming?

QLED and OLED screens boast different qualities that both enhance gaming experiences. Typically, QLED tech can produce brighter results, whereas OLED can achieve deeper blacks, better contrast, and true-to-life colors. That said, OLED screens also come with the risk of burn-in, and games feature static HUDs and elements that may affect your panel's longevity if left on display for extended periods. While modern models come with preventive features, it's still worth considering when choosing between QLED and OLED.

How to choose a gaming TV

It's easy to simply splash out on the most expensive gaming TV out there, but there's an optimal way to pick up the perfect panel for your needs. Largely, you'll want to drill down on the kind of games you play and the visuals you value during your favorite outings, as not everyone needs the same level of specs or specific features.

For example, if you've now got a PS5 Pro nestled within your TV bench, chances are you'll be looking for top specs. Ideally, you'll want something larger so that you can see Sony's new visual enhancements, like improved ray tracing and texture resolutions. Opting for a newer panel type like mini LED, QLED, or OLED will be beneficial too, as it'll help highlight the extra layer of fidelity now available at 4K.

Some of you might already be ahead of the curve with a living room PC, and players with a rig next to their TV will want to consider refresh rate. Ideally, you'll want to go for a 144Hz panel, especially if you like playing high frame rate shooters, and you can balance price by investing in a mini LED screen that can manage over 120Hz. The new LG OLED G5 can even hit 165Hz, and even some gaming monitors out there still aren't able to hit that figure at 4K.

On the flipside, if you're using a Nintendo Switch or even a gaming handheld like the Steam Deck, you'll get on just fine with a cheaper LED TV. Aiming for faster refresh rates might not be necessary either, as while 144Hz screens are becoming a norm, even 120Hz is overkill for systems that can only manage 60Hz. We're already at a stage where the latter is reserved for the cheapest panels out there, and while there's a case to be made for picking up a budget screen for some setups, you'll want to think about whether spending a bit more could come with some worthwhile benefits.

OLED screens are considered the cream of the crop, but there are a few reasons to go with mini LED or QLED instead. Chances are if you've ever gone hunting for a TV you've encountered the phrase screen burn, and while the affliction can occur on most screen types, organic LED panels are more susceptible. Modern models come with measures built in to combat the issue, so it's not a guaranteed risk. But, if you do play games with a static HUD or tend to leave your screen on a lot without much motion, it could affect the lifespan of your panel.

Ultimately, scaling TV specs with your specific hardware is key, and will help you either save money or invest in the right specs. If you happen to have the funds, simply picking up the absolute best gaming screen out there will eliminate all caveats, but it will cost you extra and come with a bunch of features you won't necessarily use.

How we test gaming TVs at GamesRadar+

At GamesRadar+, we like to test TVs with gaming as a core focus. Our savvy team of experts all love diving into new adventures on consoles like the PS5, Xbox Series X, and Nintendo Switch, and have years of experience to help them sniff out the best panels in terms of performance, features, and price.

Our review process normally kicks off with the unboxing and setup experience. Impressions straight out of the box can make a huge impact, and assessing aspects like design and assembly is key. While most modern displays don't require a lot of steps to get up and running, fiddly feet and mounting systems absolutely matter, and can serve as a barrier to playing games swiftly on your new screen.

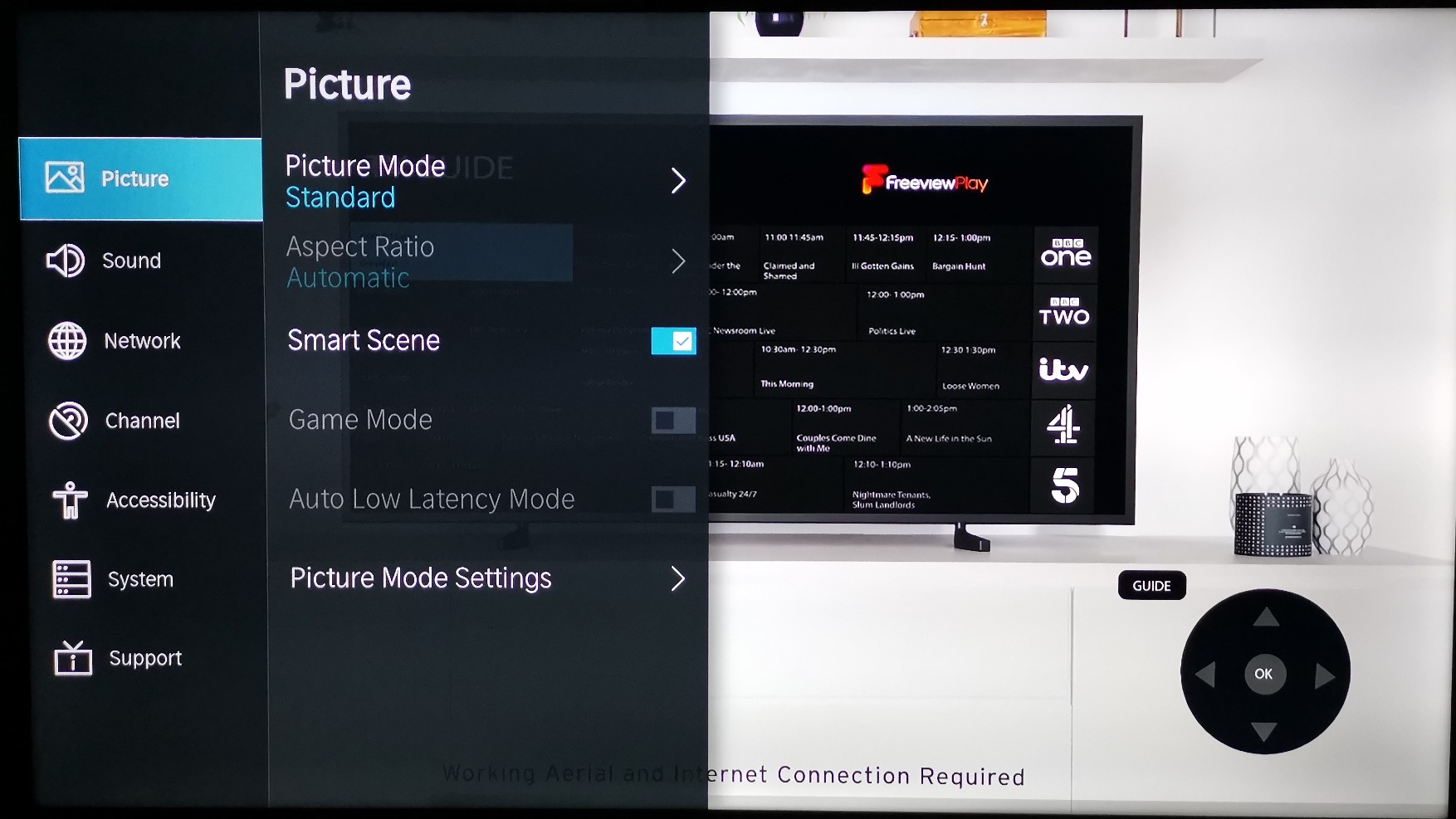

Once set up, we'll go over the TV's software and default features, keeping an eye out for any quirks or elements that could disrupt your gameplay sessions. We'll often judge a display's UI and whether it comes with easy-to-access settings at this stage, in addition to whether it has a robust home screen with streaming apps and game mode options.

Naturally, plugging in consoles and gaming systems is one of the most important parts of the review process. Regardless of a TV's specs, we'll test using the latest hardware and HDMI 2.1 connectivity, as this will let us determine performance capabilities and refresh rate options. Typically, we'll use games that can better highlight these elements too, with an example being Overwatch 2 on PS5 to test maximum refresh rate and responsiveness.

In addition to in-game refresh rate tests, we will also step back and evaluate things like brightness and contrast with gaming in mind. That includes jumping into specific outings that feature darker visuals as well as enabling HDR where compatible to see if the experience is enhanced.

When passing a final verdict and score, we will take how each TV fared during testing and align it with factors like price. That way, we'll be able to better determine whether a display is good value for money or justifies its elevated price point. Each model will target a different player type, and taking all context and use cases into consideration is key at this stage.

For more information, check out our full Hardware Policy for a rundown on how we approach all of our reviews and testing.

Oh, and if you're on the lookout for something truly massive, you might want to consider one of the best projectors, best projectors for PS5 and Xbox Series X, or best 4K projector instead.

I’m your friendly neighbourhood Hardware Editor at GamesRadar, and it’s my job to make sure you can kick butt in all your favourite games using the best gaming hardware, whether you’re a sucker for handhelds like the Steam Deck and Nintendo Switch 2 or a hardcore gaming PC enthusiast.