Why 60 frames per second should be the standard for next-gen

We've got the chance to set the standard high. Let's do it right!


"30fps, I think, is a clearly established standard and is now the minimum acceptable standard, and it will only go up from there. As we hit the next gen, God help us, it might become 60!"

Those are the words of Capcom’s Alex Jones, producer of DmC: Devil May Cry, a still-beautiful game that controversially took the series away from 60 frames per second and down to 30 (albeit with some alleged fancy visual tricks to hide the fact). Why controversially? Because 60fps really matters to some gamers. Big time. In fact, with next-gen just around the corner, I think Alex is right to suggest it's time to move up. 60fps should be the standard for next-gen games, because it makes a huge difference in so many ways. Let's take a closer look...

Contrary to what your mind is constantly telling you, TV screens don’t display moving images. They display a rapid succession of still images to trick your mind into thinking you’re watching moving images. It’s a small but important difference. Although perhaps ‘trick’ isn’t the right word. So eager are our brains to make sense of what they’re shown, they’ll happily fool themselves, filling in countless gaps between images and allowing us to suspend our disbelief so we can watch a film or play a game.

But that information has to arrive quickly. The fewer still frames you are shown every second, the less convinced your brain is by the movement it’s seeing. The game or film will start to look less fluid, then decidedly jerky, before your mind gives up trying to recognise movement and dismisses what it’s seeing as a mere collection of separate images. For example, look at Team Fortress 2 running at 1fps:

It's a slideshow. But I bet you still imagined movement, even then, didn’t you? You were linking each frame to the next like a storyboard, imagining the reloading animation and the character's movement across the room. That's how keen your mind is to make sense of what it's seeing. But there's only so much it can do with such limited information. Around 10 frames per second is roughly the minimum for lifelike images to appear to move properly, although, let's face it, video games need to be a lot quicker than that.

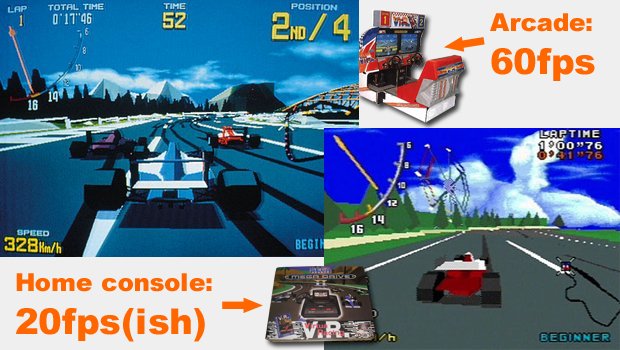

So how fast should games be? We've had everything from perhaps the worst frame-rate in any game ever through to Quake being run at thousands of frames per second. Ironically, the 16-bit era was a golden age for 60fps games, with everything from Sonic and Mario through to Pac-Man and Zelda running at 60fps. But then, when 3D came along in the arcades (running at a beautiful 60fps), it all slipped on home consoles because the processing load was so high.

Games like Soul Blade on PS1, GoldenEye on N64 and even the original Gran Turismo all ran at 30fps or lower. It was a frustrating time. But it's no coincidence that these games also pushed the boundaries of what was possible on home machines. The rule is simple: the more you stretch the hardware, the more the frame-rate takes a hit.
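To put rough numbers on that rule: a frame rate is simply the inverse of how long the hardware gets to build each image. At 60fps there are about 16.7 milliseconds per frame; at 30fps, about 33.3. The sketch below is a hypothetical illustration (the function names are mine, not taken from any game or engine). It also assumes a 60Hz display with double-buffered vsync, where a frame that misses its slot has to wait for the next screen refresh. That is one common reason a game drops straight from 60fps to 30fps rather than settling somewhere in between, though it's an assumption, not a claim about how any particular game mentioned here was built.

```python
import math


def frame_budget_ms(fps: float) -> float:
    """Milliseconds available to render one frame at a given frame rate."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


def vsynced_fps(render_ms: float, refresh_hz: float = 60.0) -> float:
    """Effective frame rate when every frame must wait for a screen refresh.

    Assumes simple double-buffered vsync (hypothetical model): a frame that
    takes longer than one refresh interval is held until the next refresh,
    so the displayed rate snaps to refresh_hz / 1, / 2, / 3 and so on.
    """
    refresh_interval_ms = 1000.0 / refresh_hz
    refreshes_per_frame = max(1, math.ceil(render_ms / refresh_interval_ms))
    return refresh_hz / refreshes_per_frame


# Per-frame time budget at a few of the frame rates discussed above.
for fps in (60, 30, 10, 1):
    print(f"{fps:>2} fps -> {frame_budget_ms(fps):7.2f} ms per frame")

# A frame that takes 20 ms misses the 16.7 ms slot, so it is shown at 30fps.
print(vsynced_fps(20.0))
```

Under this model, pushing a scene's render time just a few milliseconds past the 60fps budget halves the frame rate, which is why demanding games so often land on 30fps.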


Growing up with such games is undoubtedly the reason why gamers like me can 'see through the Matrix'. We got used to championing games with good frame-rates because there simply weren't that many of them around, and the ones we had were fuel for debates about which console was best. But after the 32-bit era ended, I'll never forget my intense disappointment when I played Sega Rally 2 on Dreamcast and the frame-rate dipped to 30fps at the first corner, then fluctuated between arcade-perfect 60fps and 'last-gen' 30fps for the rest of the game. That wasn't good enough then. It still happens now, and it needs to be eliminated. Completely.

Justin was a GamesRadar+ staffer for 10 years but is now a freelance writer, musician and videographer. He's big on retro, Sega and racing games (especially retro Sega racing games) and currently also writes for Play Magazine, Traxion.gg, PC Gamer and TopTenReviews, as well as running his own YouTube channel. Having learned to love all platforms equally after Sega left the hardware industry (sniff), his favourite games include Christmas NiGHTS into Dreams, Zelda BotW, Sea of Thieves, Sega Rally Championship and Treasure Island Dizzy.
