Color commentary

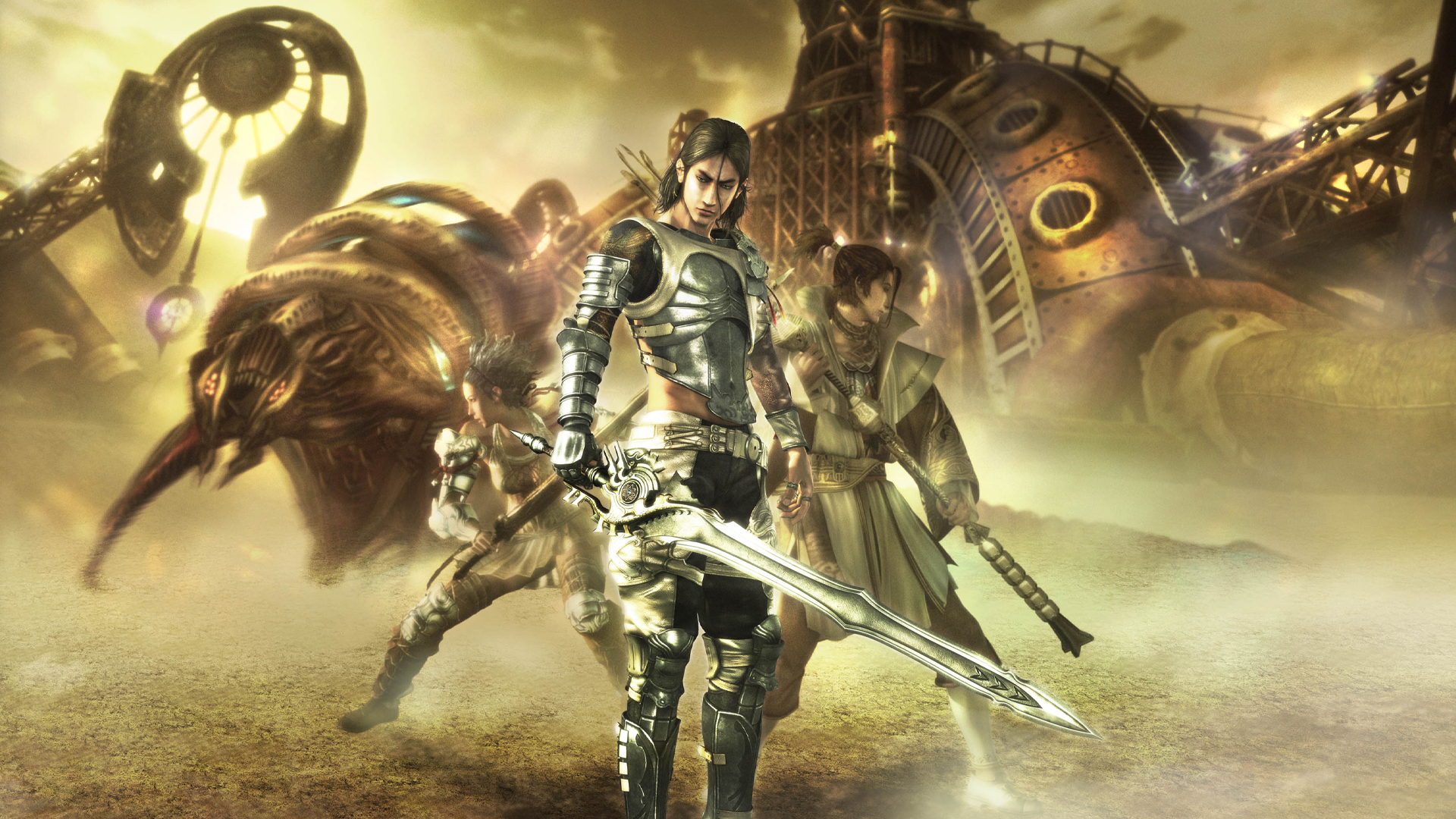

Even as technology progressed, this kind of mental agility was still required for as long as environments were 2D (SNES) or viewed through eyepoke-o-vision (N64). But the closer we get to photorealistic graphics (Nvidia think we're 10 years off, and they tend to know about such things), the less freedom the mind has to fill in the gaps. Indeed the brain, otherwise left redundant, turns its attention to the detail that's missing rather than to what's there.

Let's wheel in Glenn Entis, the chief technical officer at Electronic Arts, as Exhibit A. At the recent SIGGRAPH conference, he contended that "when a character's visual appearance creates the expectation of life and it falls short, your brain is going to reject that." He went on to wax lyrical about EA's motion-capture techniques, and how they can be used to accurately record a true-to-life gait, stance and movement of facial muscles for the company's character models.

Ignoring for a moment that a large chunk of development time is being wasted making sure the characters' cheeks wobble properly, what's actually happening here is a calamitous reversal of development priorities. Where the best games have historically developed the two most fundamental aspects of a game (level design and character maneuverability) in tandem so that they complement one another, in this scenario the developers tie their metaphorical shoelaces together by sticking with the predetermined physics of real life.
