What's Next? - Is force feedback the missing link in motion gaming?

Let's pretend your idea of the perfect hands-free, motion-controlled game is made in the next few years. Maybe it's a shooter that uses Kinect 2.0 and has a miraculously intelligent control scheme that somehow functions well without a gamepad (work with us here, use your imagination). Maybe it's a fantasy game in which you can imitate the motion of swinging a sword to chop monsters in half. Or, maybe it's just another one of those goofy minigames where you swat at soccer balls as they launch toward the camera. Regardless of the type of game that comes to mind, the very nature of how you play it means it'll have one seemingly harmless but significant flaw: a lack of force feedback.

It's jarring to wave your hands through the air in an attempt to hit an on-screen object, only to receive no physical feedback when you do. You know you were successful because you can see the object react to your input, but your hands keep moving as though nothing happened. So how do we bridge the disconnect between our actions and a game's response? According to Rajinder Sodhi, a PhD candidate at the University of Illinois and lead researcher on Disney's Aireal project, the answer lies in haptic devices, which tap into your sense of touch.

"We've focused so much on visuals in the last few decades," Sodhi says. "The idea of using haptics...there's this rich space we haven't really touched on. It's one of the few areas of creating that extra level of immersion that's still untapped."

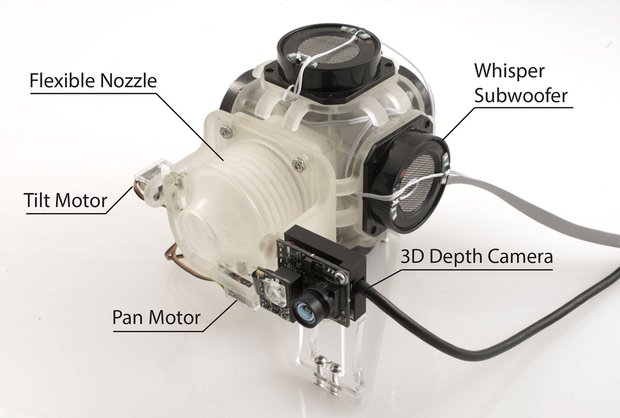

Take, for instance, the aforementioned Aireal project. Aireal is essentially a miniature, mountable cannon that fires concentrated vortices of air at a user, providing a form of tactile feedback. When you successfully swat a soccer ball on the screen, Aireal hits the palm of your hand with a burst of air strong enough to trick your brain into thinking you actually hit a soccer ball.

What's more, Aireal could extend to uses outside of gaming, including communications and other forms of entertainment. Imagine how much scarier a horror film might become if a quick burst of air poked you at the exact second a monster came crawling out of the shadows. It also presents another exciting opportunity: "What happens when you're Skyping with someone, for example, and you can give each other high fives and feel that?" Sodhi suggests. "Or, if you want to convey emotions through remote communications somehow, can we relay some of that through haptics?"

You don't even have to wear any equipment for this to work, which sidesteps a commonly overlooked obstacle that can hold back even the most exciting technologies. As we mentioned in our piece on virtual reality, we know plenty of folks who can't be bothered to put on 3D glasses for their 3D TVs, and getting people to suit up will undoubtedly be a hurdle the Oculus Rift virtual reality headset must overcome. Most people simply prefer simplicity over complexity.

"Free-air haptics naturally fits in as a solution to that problem," Sodhi says. "You don't have to impede how the user naturally interacts with the world." Of course, adding a physical connection to hands-free gaming won't magically make you enjoy those experiences if they're not already your thing, but it does bring us closer to a once-imaginary sci-fi future of gaming, one in which you're completely immersed in a virtual world that closely resembles the real one.

"We always talk about, you know, are you going to be sitting on your couch and not wanting to move because you're so immersed in this virtual experience?" Sodhi says. "You're getting haptic feedback, you have your Oculus Rift on, or some other [head-mounted display], you're so immersed in this world--in 10-20 years I can definitely see new gaming experiences being that good."

Sure, the tech required to produce those experiences is still in its infancy, but we can't wait to see where it leads--we just hope it doesn't involve the swatting of soccer balls.

What's Next? is a bi-weekly column exploring the future of gaming tech.

Ryan was once the Executive Editor of GamesRadar, before moving into the world of games development. He worked as a Brand Manager at EA, and then at Bethesda Softworks, before moving to 2K. He briefly went back to EA and is now the Director of Global Marketing Strategy at 2K.