"It's just impossible": Devs explain why big online games always seem to break at launch

Developers from EA, Massive, and more explain why and how things go wrong

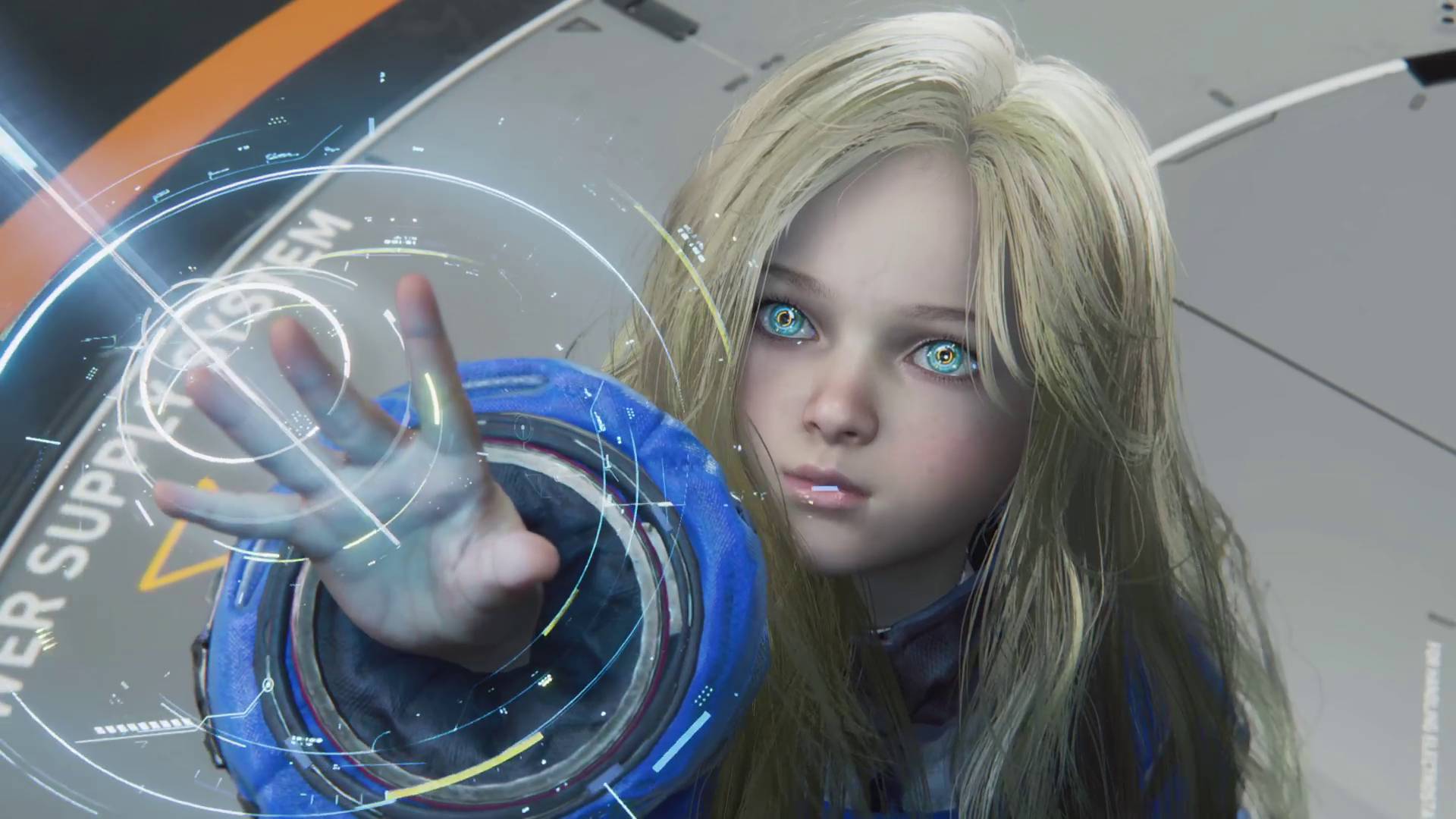

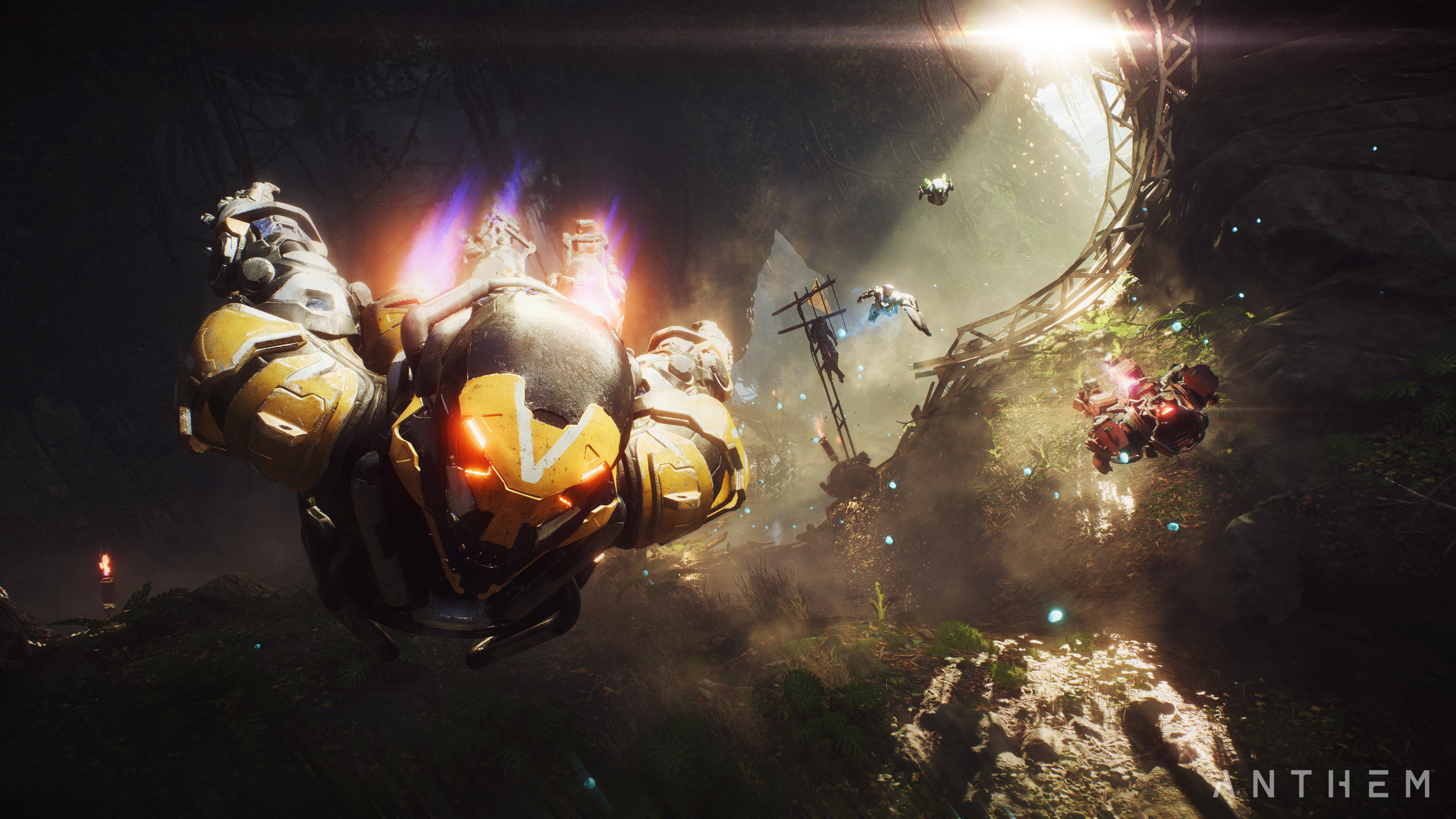

Between the fragmented launch of Anthem, the surprise launch of Apex Legends, and the upcoming launch of The Division 2, early 2019 is stacked with big online games. As BioWare’s latest and countless games before it have demonstrated, multiplayer games of this scope rarely launch in a brilliant state. It seems like every online game has some technical issues at launch, whether they're minor ones, like the week-one bugs in Apex Legends, or game-breakers, like the connection issues that initially crippled Diablo 3.

We've had troubled launches for as long as we've had online games, but it feels like the conversation around launch issues hasn't really gone anywhere. We see the same questions pop up every time. Why did this happen? Why didn't the developers anticipate this? Why did it take so long to fix? With so many big online games releasing so close together, and with Games as a Service on the rise across the industry, now seemed like a good time to bring some of these questions to developers in the hopes of demystifying the dreaded launch-day downtime. Why do we keep seeing the same issues when games launch, and how do developers handle them?

"Capacity is very rarely the problem"

Whenever games go down or are slow to connect, many assume that it's because they ran out of server space. That the developers underestimated how many players would log in and, as a result, their servers buckled under the strain. In that case, all they need to do is pay for more servers, right? Well, no, not necessarily; as is often the case when making games, it's not that simple.

"One of the mindsets you see online a lot is, 'Why hasn't Company A got more servers?'" Alex Mann, a development manager and former QA analyst at EA, tells us. "At launch, you see the biggest amount of traffic on these games. Everybody's been hyped, the marketing team have done their job well, everyone's really excited to get online, and the moment that the game pops everyone's clicking ‘Go.' But you'll notice with the life cycle of most games, you have this massive burst and then it tails off. If every games company out there bought the hardware to cover everything they needed within that initial burst, two weeks later they'd have 50% of their hardware sitting there never used."

This makes it difficult for developers to prepare for launch without overspending and buying too many servers. Luckily, devs now have access to virtual servers through companies like Amazon Web Services, and these can be activated or deactivated as needed. These kinds of servers also became necessary as games shifted away from peer-to-peer connections – the type that supported games like Halo 2 back in 2004 – toward dedicated servers that power massive, persistent games like Destiny 2 and Anthem. However, virtual servers aren't a miracle cure, and they have problems of their own.
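The trade-off Mann describes, buying hardware for the launch burst versus renting capacity that can be switched on and off, comes down to simple arithmetic. Here is a minimal Python sketch of that comparison. The player counts and the figure of 1,000 players per server are hypothetical, chosen only to show how a fleet sized for day one ends up half idle once the burst tails off.

```python
import math

# Hypothetical capacity figure for illustration only; not from any real game.
PLAYERS_PER_SERVER = 1_000


def servers_needed(players: int, per_server: int = PLAYERS_PER_SERVER) -> int:
    """Smallest number of servers that can host `players` concurrent players."""
    return math.ceil(players / per_server)


def idle_fraction(fixed_servers: int, players: int) -> float:
    """Share of a fixed fleet that sits unused at a given player count."""
    return 1 - servers_needed(players) / fixed_servers


# A hypothetical launch-shaped curve: a big burst, then a tail-off.
daily_peak_players = [1_000_000, 900_000, 750_000, 600_000, 500_000]

# Option A: buy enough hardware for the day-one burst and keep it.
fixed_fleet = servers_needed(max(daily_peak_players))

# Option B: rent (virtual) servers and resize the fleet each day.
elastic_fleet = [servers_needed(p) for p in daily_peak_players]

for day, players in enumerate(daily_peak_players):
    print(
        f"day {day}: elastic={elastic_fleet[day]:>5} "
        f"fixed={fixed_fleet} idle={idle_fraction(fixed_fleet, players):.0%}"
    )
```

By the last day in this made-up curve, the fixed fleet is 50% idle, which is the scenario Mann warns about; the elastic fleet simply shrinks to match demand.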

"Capacity isn't necessarily about the number of servers," says Mann. "Even if we're expecting millions of players and we've got the servers, we're not expecting them all to hit that login portal at the same time. It's about having enough lanes on the motorway for people to come through. You've got two countries connected via bridge, and both countries have tons of space on them, but to move from the client country to the server country, how big do you make that bridge? That isn't about 'Let's throw loads of money at it and make it bigger.' At the end of the day, it's often a bottleneck based on the technology and engine that you're using."
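Mann's bridge analogy is about throughput: no matter how many game servers sit behind the login portal, players only get in as fast as the narrowest link admits them. The minimal Python sketch below models that, assuming a single login front door with a fixed admission rate and a burst of players clicking "Go" in the first few seconds. All numbers are hypothetical, and `drain_login_queue` is an illustrative helper, not any studio's actual login system.

```python
def drain_login_queue(arrivals: list[int], logins_per_second: int) -> list[int]:
    """Simulate a login front door that admits at most `logins_per_second`.

    arrivals[t] is how many players try to log in during second t.
    Returns the number of players still waiting at the end of each second,
    continuing until the queue is empty.
    """
    if logins_per_second <= 0:
        raise ValueError("logins_per_second must be positive")
    waiting = 0
    backlog = []
    t = 0
    while t < len(arrivals) or waiting > 0:
        # New arrivals join the queue this second (none after the burst ends).
        waiting += arrivals[t] if t < len(arrivals) else 0
        # The "bridge" lets through at most logins_per_second players.
        waiting -= min(waiting, logins_per_second)
        backlog.append(waiting)
        t += 1
    return backlog


# Hypothetical launch burst: 65,000 players in the first three seconds.
burst = [50_000, 10_000, 5_000]
narrow = drain_login_queue(burst, logins_per_second=2_000)
wide = drain_login_queue(burst, logins_per_second=4_000)
print(f"narrow bridge: queue clears after {len(narrow)} s")
print(f"wide bridge:   queue clears after {len(wide)} s")
```

Note that the function has no notion of how many game servers exist. In this model, adding servers behind the portal does nothing for the queue; only widening the bridge (a higher admission rate) shortens the wait, which is why Mann frames capacity as a bottleneck in the technology rather than a matter of spending.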

One misconception that Mann often sees has to do with how development teams operate: specifically, the idea that anyone can fix anything.

"When you're dealing with complex code bases spanning multiple files built by 200 people, if I look at my coders and they've built this, coder A doesn't know that entire project," he explains. "There's this concept that everybody knows everything about the game code, so the level artist should be helping with the fix for level architecture. You know, community managers don't fix bugs."

I heard the same thing from Fredrik Brönjemark, director of live services at Massive Entertainment, the studio behind The Division. "Large online games are extremely complex pieces of software, relying on a massive online server infrastructure to support it," explains Brönjemark. "On top of that you also have the additional layer of first-party services, so there are a lot of different ways things can go wrong! For us on The Division, the main types of incidents that could cause downtime or connectivity problems were instability in the game software running on the servers, or hosting provider issues. Running out of server capacity is very rarely the problem. On The Division 2, our servers scale automatically depending on the number of players wanting to play the game."

Any number of things can go wrong on launch day, and more often than not, sheer player count is relatively low on the watch list. There could be a memory leak, a single but catastrophic line of incorrect code, or a spot of lag buried somewhere in the enormous server pipeline. A game might have an issue with a certain ISP, or as Brönjemark mentioned, the first-party services that a game relies on could go down. The problem could be anywhere, but no matter where it is, it's everybody's problem. Nobody is an island when it comes to online games, and that can make responding to issues incredibly difficult and time-consuming.

"Every launch is different"

All of the developers I spoke to described a similar triage process for fixing issues. Mann offered an overview of what a fix might look like from start to finish. First, a developer has to sift through the symptoms of the problem to identify the actual cause. Then they bring in the people who are responsible for that area of the game to work out a solution. Is it something they can update on their side, or do they need to issue a patch? Once they find a solution, they'll have to test it to make sure it doesn't break anything else, especially if it is a patch.

"There's a check before anything goes live," says Mann. "There's a lot of back-and-forth with platform holders [like Sony and Microsoft] to make sure we're working towards success together; we've got to go through QA steps. And as we're going through that patch, if we knee-jerk react and fix this now, but then half an hour later we have to do a second patch in the same day, it's going to be a mess. So we have to say, 'We're doing this patch; what other critical issues can we fix as part of this? What other stuff is wrong?' You can't just make a patch in half an hour. You've got to make sure you're being smart with how you're patching that content."
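The batching Mann describes, asking "what other critical issues can we fix as part of this?" rather than shipping a patch per bug, can be sketched as a simple selection rule. This is a minimal Python illustration under loudly stated assumptions: the `Issue` fields, the 1-to-4 severity scale, and the `plan_patch` helper are all hypothetical and do not reflect any studio's real bug tracker or release process.

```python
from dataclasses import dataclass


@dataclass
class Issue:
    """A hypothetical tracked issue; fields are illustrative, not a real schema."""
    title: str
    severity: int      # assumed scale: 1 = critical ... 4 = cosmetic
    fix_ready: bool    # a candidate fix exists
    qa_passed: bool    # that fix has cleared QA


def plan_patch(issues: list[Issue], max_severity: int = 2) -> tuple[list[Issue], list[Issue]]:
    """Bundle every QA-passed fix at or above the severity bar into one patch.

    Returns (included, deferred). Issues whose fixes haven't passed QA are
    deferred to a later patch instead of being rushed out the same day.
    """
    included, deferred = [], []
    # sorted() is stable, so issues of equal severity keep their input order.
    for issue in sorted(issues, key=lambda i: i.severity):
        if issue.severity <= max_severity and issue.fix_ready and issue.qa_passed:
            included.append(issue)
        else:
            deferred.append(issue)
    return included, deferred


# Hypothetical backlog on launch day.
issues = [
    Issue("Login timeout on EU servers", 1, True, True),
    Issue("Tutorial item fails to spawn", 1, True, False),
    Issue("Crash when opening map", 2, True, True),
    Issue("Typo in settings menu", 4, True, True),
]
included, deferred = plan_patch(issues)
print("patch:", [i.title for i in included])
print("later:", [i.title for i in deferred])
```

The point of the rule is the one Mann makes: a critical fix that hasn't been through QA waits for the next patch instead of triggering a second, same-day release.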

Once all that's done, if the universe allows, the devs can push the patch through and begin monitoring it and communicating its effects through their social channels. But "there's not a half-hour turn around," says Mann, adding, "there's maybe hundreds of people that will touch that before it goes out."

Frank Sanchez, a former BioWare and Gazillion Entertainment community representative with engineering experience, knows this paradigm well. As someone who's spent a lot of time collating responses and drafting patch notes, he's seen both sides of the update process, from player feedback to patch submission. He also knows better than most how complicated fixes can become, and how frustrating launch issues can be for players and developers alike.

"We are the last people that want to see a server go up and then two hours later lag so badly people can't log in," Sanchez explains. "I guarantee you if [the developers] bring a server up for a beta and it doesn't work right, that's probably at the tail end of somebody who put in time beyond what they were already crunching to get it to a state where it could launch. So when somebody online is like 'Well, they're just lazy,' that's completely and blatantly false. The work is put in, the challenge is how to respond to issues and communicate to players when they do happen. It's an imperfect science … every launch is different. Even if two games are developed on Unity or whatever, even if the genre is the same, the process is different. You can't say 'This game was fine, what's the problem with this game,' because there's a lot of uniqueness to every game."

Sanchez's comments touch on another common question that crops up around launch time: why didn't you anticipate this? Maybe game X ran into problems a few months ago. Surely the developers of game Y could see that and take measures to avoid those same problems, right?

Differences in individual games aside, everyone I spoke to said that some issues can't be anticipated. Internal testing can only do so much, and it can never truly compare to actually launching a game.

"There's just no simulation for live"

"You can't plan for live [concurrent players]," Sanchez continues. "It's just impossible. There is no substitute. I've seen every method of stress testing something internally before you put it out there, and there's just no simulation for live."

This is where pre-launch stress tests and beta periods come into play. They're not perfect, but they are the best way to gauge how a game's launch will look and what needs to be fixed before prime time. "Betas are massively helpful," says Mann. "You cannot get the size and scale that you do with a beta test internally. You just can't hire that many people to hit your servers. The best way to test live is by being live. If you look at a lot of alphas and betas, there's this concept that there aren't enough servers, that there are bugs and other issues, but within a week they've triaged and the latest or final release doesn't have those issues. That's only because it's experienced [live] and investigated during those betas."

"Recently, a studio ran a beta for its game and a bunch of my friends jumped in excited to play, and they hit a bug where they're stuck in the tutorial because a key item hasn't spawned in the server,” Mann tells me, noting how tricky it can be to anticipate flaws in an online game launch. "I guarantee in all the QA testing on that game, that item was always there. The only way you're going to find that is by testing this flow on a massive scale. I suspect those guys are now well aware of that and all over that issue to have it fixed for launch, all because of that beta work."

You can't fix everything

If betas are so great, why don't developers hold more of them, and why not hold them months before launch? As is often the case in games, technology and time don't always let devs do exactly what they want to do. Due to the way most games are made, they don't come together until right at the end, which is generally why betas appear so close to launch. And regardless of what devs learn from a beta, no matter what problems it may reveal, they can't realistically delay their game in response to them. A web service provider doesn't want a team to miss its server start date any more than a publisher wants to miss its launch date. This is why, just as some issues can't be anticipated, some bugs just can't be fixed in time for launch.

The Division 2's beta schedule is fairly comprehensive, with private and open betas as well as a more targeted stress test. Not all games can swing that, but those that do benefit immensely from what Brönjemark calls the "final rehearsal." The game's first open beta is planned for March 1 to 4, two weeks before launch.

"I would love to ship with no bugs," considers Sanchez, a comment you’ll hear from any developer who has gone through hell and back to actually ship a product. "But any team will tell you that's very difficult to do. That's just the reality of it. The list of things that need to be fixed is ever-changing. You have to understand that when it comes to bugs, there are bugs that are potentially shipped, and there are bugs discovered after launch. All that has to get prioritized and planned against and talked about. It's triage. The luckier launches are the ones that have bugs but don't have crippling bugs."

On top of that, making a beta build can be a lengthy and labor-intensive task of its own. Developers can't just hack off a piece of their game and upload it to Xbox Live or the PlayStation Network. Betas are often developed separately from (but in tandem with) a game, which takes more time and money. This is why issues that have long since been fixed in the main build of a game may still be present in its beta. We saw this in Anthem's demos and in the latest beta for The Division 2, for instance.

"Quite often I hear people saying that they think beta tests are only marketing campaigns, and the developers can't learn anything from them anyway as the game is already finished at that point," Brönjemark tells me. "I'd like to dispel that myth. Even when the game is already printed on disc and the day-one patch is already done, there are still a tremendous amount of things that we are able to address server-side, both in terms of tech but also in terms of gameplay and balancing."

On the flip side, Sanchez says, "publishing schedules and game development timelines are very aggressive, sometimes too aggressive. When does something ship, how much funding do you have left, how long have you been in development. Sometimes the success of launching really depends on how many times you've had to push back your milestones, how many times did you delay your launch because you had something to polish. Some games can only be shipped with a certain amount of polish. You can't say it's completely and utterly fine once it goes gold. There are instances in which a game will ship in a state that's launch-ready, but there might be a little bit of polish that needs to be done."

A launch is more than day one

Developers and players both want their games to work perfectly the first time they fire them up, but the reality of game development is that there are so many moving parts and so many immovable limitations that some problems are bound to slip through the cracks, and the odds of that only increase as games get bigger and bigger. Sanchez reckons this is why we need to look at launches like these holistically. A game's performance on launch day is important, but it's not everything.

"It's not the issues, those are always going to happen," Sanchez says. "It's how you address those issues. If you're slow or you don't address them properly, or if you're hostile toward your players, it's going to stick with them. If there's one thing I wish players would understand, it's that issues do happen regardless of how well you plan for them. You should hold developers accountable for how they get responded to. If you have a problem a week into your launch, put their feet to the fire and say 'Hey, I'm not having a good experience, this is why, I'm concerned that these issues aren't fixed.' Those are the things we want to hear about."

No game launches perfectly. It just doesn't happen. As Sanchez puts it, "anything you would consider to be a smooth launch is just something that never rose to the level where a player perceived something was wrong." There's always scrambling going on behind the scenes. Mann described it as a bunch of developers huddled together in a "war room" watching a wall of monitors for feedback and potential issues. Sometimes they catch those issues early, sometimes they don't show up for a few hours, and sometimes they can't be fixed for a few more hours or even a few days.

The point is, massive online games are always going to have some technical issues at launch. Hell, all modern games have some issues at launch. That's just the nature of today's technology and today's industry. That doesn't mean players should blindly give a pass to games that are pushed out with catastrophic design or other issues, but it does put the average launch into perspective. A game might have minor issues we don't even notice or it may have obvious game-breakers. In any case, all anyone can do is hope for the best, prepare for the worst, and call out problems when they do inevitably surface.

Austin has been a game journalist for 12 years, having freelanced for the likes of PC Gamer, Eurogamer, IGN, Sports Illustrated, and more while finishing his journalism degree. He's been with GamesRadar+ since 2019. They've yet to realize his position is a cover for his career-spanning Destiny column, and he's kept the ruse going with a lot of news and the occasional feature, all while playing as many roguelikes as possible.
